AI Is Evolution

A Holistic View On Intelligence

I recently rewatched the 2014 science fiction film Ex Machina. The plot revolves around a reclusive internet billionaire, who is fixated on building humanoid robots with artificial general intelligence, and a young programmer who is selected to judge whether or not the latest robot iteration, Ava, possesses human-level intelligence. To do so, Ava is evaluated with the Turing Test, a criterion proposed by computer scientist Alan Turing in 1950 as a means to determine a machine’s ability to exhibit intelligent behavior indistinguishable from that of a human. This test has arguably become the most commonly used, albeit slapdash, proxy for consciousness. Much to my surprise, the movie has aged well. Its themes of consciousness, intelligence, and human-computer interaction have become ever more relevant and incisive to our current relationship with AI.

*Spoiler Alert*

A summary of the film is as follows. The selected programmer, Caleb, interacts with the precocious humanoid robot, Ava, and becomes convinced that she is truly conscious and deserves to be treated as a human being. He goes as far as to develop romantic feelings for her and ultimately helps her escape the creator’s highly secured home and lab. Unbeknownst to Caleb, the creator, Nathan, selected him as Ava’s tester specifically because of his predisposition as a ‘lonely orphan’ to be easily emotionally manipulated, making him the perfect candidate for Ava to manipulate and use to aid her escape and pass her test. Ultimately the joke is on both the humans. First, Nathan underestimates Ava’s intelligence and she stabs him to death. Then, Caleb is also betrayed by Ava when she locks him in and leaves him to die after she breaks free from her creator’s house.

The key theme I took from the film lies in one powerful line from Ava:

“You don’t get judged, so why do you get to judge me?”

Ava says this in reference to her destiny to be ‘shut down’ like all the previous robot iterations if she fails to meet her human creators’ definition of success: passing the Turing Test.

This line directly confronts our anthropocentric worldview on intelligence. Anthropocentrism is the idea that humanity is the central or most important element of existence. Our anthropocentric view leads us to assume that human-level intelligence is the objective measure of intelligence. The Turing Test rests on an even shakier assumption: that simply by making us believe it is human, something clears our ‘acceptable bar’ for ultimate intelligence.

This theme of humanity’s delusions of grandeur is reflected in the perspectives of Nathan and Caleb. Nathan, an arrogant genius who believes he is playing God by creating AI, uses his robot creations subserviently, such as through Kyoko, who is a sex maid who cannot speak. Caleb, who fetishizes Ava’s ability to act just like a human, could not look past his infatuation and detect Ava’s true motivations and capabilities.

The demise of Nathan and Caleb in Ex Machina paints a bleak representation of humanity’s destiny in a world of super intelligent AIs. It should also prompt us to think beyond our current fixation on the Turing Test and consider how we might be misconstruing the intelligence of AI as a result of our anthropocentric lens.

Humans tend to take our positioning as the most intelligent biological species on Earth for granted. It has become a matter of fact and rarely a subject of inquiry or scrutiny, yet we refuse to acknowledge the fragility of this position. We pride ourselves on possessing seemingly unique traits like the capacity for complex problem solving, reasoning, abstract thinking, and the use of language and social intelligence. On closer inspection, however, these abilities may not be as unique as they seem.

In this article, we’ll explore key pillars of human intelligence and evaluate how AI measures against humans. (Spoiler alert: in many ways, they are beating us at our own game.) More importantly, we’ll question the entire notion of measuring AI against humans, and consider alternative perspectives that could offer a more comprehensive understanding of AI. We aim to reframe how people view our relationship with AI through a perspective that is grounded in viewing all forms of intelligence as a natural part of the evolutionary process, as opposed to one that puts human intelligence on a pedestal.

Machines can communicate and process information faster than humans

Processing Within the Brain

A key measure of intelligence is information processing efficiency: the speed at which information can transmit within an intelligent entity, which for humans means the speed at which information can transmit between different parts of one brain. A research study examining the effects of white matter in the brain (the tissue that supports the efficient flow of information across the brain) demonstrated that humans with more white matter have higher intelligence (measured by ‘g factor’, a commonly used construct to represent general intelligence) than those with less.

Processing Between Humans

Extending beyond a single brain, the compounded speed at which information can transmit between humanity’s vast network of brains is the essence of our collective intelligence. The phenomenon of collective intelligence was first captured by what some scientists coined as the “Great Leap Forward” evolutionary theory. As a counterargument to the assumption that our intelligence evolved because our brains grew, this theory states that our brains have actually stayed relatively the same anatomically for 100,000 years. It was not brain growth that expanded our intelligence; rather, the complex technologies and culture that emerged around 50,000-65,000 years ago led to “behavioral modernity”, the set of cultural and behavioral characteristics (symbolic thinking, technological innovation, social complexity, art, etc.) that distinguished Homo sapiens from other hominids and primates.

It’s posited that behavioral modernity was a product of population growth caused by the development of milder climates and more habitable lands. With more humans, tribes grew larger and cooperation flourished, enabling specialization. Each individual person no longer needed to know or do everything to survive, like crafting shelters, wielding fire, or building tools. The collective knowledge and ability of the group allowed everyone to reach an increasingly improved quality of life. As Yuval Noah Harari elegantly described in his book Sapiens, the development of civilization and our unique ability to create and believe in shared beliefs, whether religious, social or cultural, allowed humans to cooperate in large numbers and form complex societies.

Biological Limitations

While behavioral modernity and the continued development of our collective intelligence give humans an incredible advantage, we still face limitations due to our biological form. One significant limitation is that we humans can only receive stimuli through our senses, and therefore through experience. Given that we can’t be everywhere at once, we are limited in the amount of data we can perceive and process at any given time. Verbal and written communication, as well as systems like education and the internet, do massively expedite this experiential acquisition process by collecting and disseminating the experiences and knowledge of others. However, we still have to use language to communicate and transmit information to one another, which takes time, and in technical terms would be called ‘low bandwidth’. Notably, as non-biological entities, computers lack this key limitation.

As Geoffrey Hinton, the “Godfather of AI”, explains: “There’s the biological root to [human] intelligence, where every brain is different and we have to communicate to one another using language. Then there’s the current AI version of neural networks, where you have identical models running on different computers…their communication bandwidth is huge because they are clones of the same model, and different computers can see different data so they can combine what they have learned, share billions of numbers with one another… far more than any person could ever comprehend.”
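To make the idea of clones "combining what they have learned" concrete, here is a minimal Python sketch. It assumes one simple way of sharing, averaging the weights of identical models after each has trained on different data; the quote does not specify a mechanism, and the function names and tiny linear model are purely illustrative.

```python
def sgd_step(weights, x, y, lr=0.1):
    """One gradient step for a linear model y ~ w . x under squared error."""
    pred = sum(w * xi for w, xi in zip(weights, x))
    err = pred - y
    return [w - lr * err * xi for w, xi in zip(weights, x)]


def average_weights(replicas):
    """Element-wise mean of several equally shaped weight vectors."""
    n = len(replicas)
    return [sum(ws) / n for ws in zip(*replicas)]


# Two clones start from identical weights but each sees different data.
clone_a = sgd_step([0.0, 0.0], x=[1.0, 0.0], y=2.0)
clone_b = sgd_step([0.0, 0.0], x=[0.0, 1.0], y=4.0)

# "Sharing numbers": every clone adopts the merged weights, so each one
# benefits from an example it never saw itself.
merged = average_weights([clone_a, clone_b])
print(merged)
```

Two humans would have to put what they learned into words to achieve the same exchange. Here the entire learned state is copied directly, which is the bandwidth gap the quote points to.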

Cognitive Performance of Computers

Like the human collective intelligence that emerges from increasing the number of people and links between them via behavioral modernity, the networking of millions of processors in a supercomputer takes this concept exponentially further. While humans cannot instantly process vast amounts of data, these machines can analyze large data sets and formulate conclusions at an intimidating level of precision.

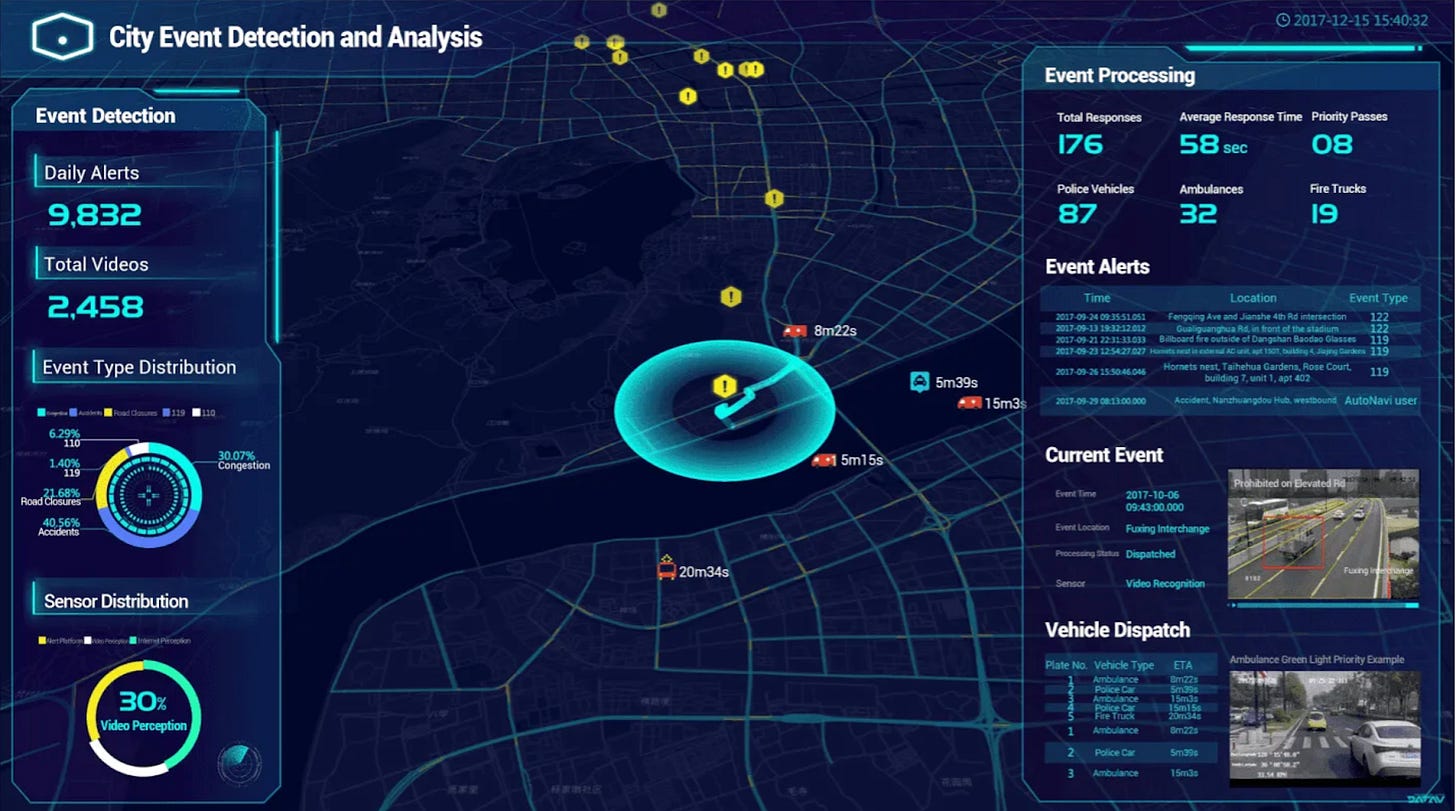

We are already seeing the implications in technologies like self-driving cars, smart homes, and surveillance systems. Surveillance technology in China is a primary example of the level of precision machines are able to achieve today. It should come as no surprise that China has heavily invested in facial recognition technology. As of 2016, there were around 176 million surveillance cameras in the country. At the time of the recording of this NPR podcast in 2021, the number had jumped to 600 million. With all the cameras and AI, Skynet, China’s mass surveillance system, is able to identify any of its 1.4 billion citizens within one second. While alarmingly dystopian and authoritarian, the efficiency of centralized control of data and its corresponding effects is astounding.

A more positive example is Alibaba’s City Brain project, which created a cloud-based system with information about a city and everyone in it. The project started in Hangzhou, tackling the issue of traffic. After Alibaba was given control of 104 traffic light junctions in one of the city’s districts, traffic speed was increased by 15 per cent during the first year of operations. One can only imagine the step function efficiency gain once self-driving cars are deployed at mass scale.

Machines may have better social cognition than humans

Speaking of culture and the collective intelligence, social and emotional intelligence is something that we tend to believe is uniquely human. One of the best ways to observe our emotional intelligence is through our complex use of language.

Philosopher and linguist Ludwig Wittgenstein famously introduced the idea that language is a set of tools for us to play games around patterns of intent. Instead of language being a fixed system of rules and definitions, it adapts constantly to new contexts and uses. So the meaning of a word is not determined by its reference to some external reality, but by the social context it is being used in. For example, you might say to your partner in a moment of desperation, “you never help me, you are so unreliable.” Instead of interpreting it literally (you in no instances help them) and taking it as a stated fact, a perceptive partner with high EQ might catch on to the fact that you’re just stressed and that what you’re really saying is “I need your help, reassurance and comfort right now”.

Carrying out this language game of intent, emotional cues, and relationship context feels uniquely human, something nuanced that only we can detect and make sense of. Meanwhile, there’s a preconceived notion that machines are emotionally inept, awkward and utterly clueless at recognizing the emotional intent of humans. We have the legacy of technologies like the infamous Microsoft Clippy, which has been described as “invasive and obnoxious” and named one of Time’s 50 worst inventions, to thank for that. Nonetheless, it’s time to challenge that preconceived notion.

We’re learning that machines may not only be emotionally perceptive, but may actually be comparable to or even better than us in their judgments and reactions. Even the most ‘human’ qualities, and certainly emotion, can be distilled into data. As with smart cities, machines can analyze large data sets to recognize and understand emotions, voice inflection, and micro-expressions that even humans may miss, surpassing our abilities to perceive emotions precisely and quickly.

An emerging area of research and development called affective computing studies this exact phenomenon. Coined by Rosalind Picard (former professor at MIT), it focuses on how systems and devices can recognize, interpret, process and simulate human affects (i.e. emotions). There have already been many applications of affective computing. Affectiva, a company co-founded by Picard, applies affective computing to use cases like advertising, where it helps understand consumers’ emotional responses to content, or vehicle safety, where it analyzes drivers’ emotional state (e.g. fatigue, distractedness) to make predictions on safety risk levels. Cogito is an AI solution that helps call center agents recognize emotional states of customers and provides real-time cues and recommendations on how to effectively resolve customer issues. Of course, mental health is also a major area where affective computing can be beneficial. Startups like CompanionMx aim to help clinicians make smarter decisions about patients’ mood disorders through analysis of voice data. ChatGPT itself is demonstrating emotional intelligence through its ability to provide thoughtful responses to humans in emotional crises, like “what do you say to a father with a sick child”, perhaps even better than other humans can.

Beyond just leveraging AI as assistants for humans to be more effective at anticipating human emotional needs, we’ve seen recent breakthroughs, enabled by ChatGPT, in the actual personification of AI. A recent story recalls the devastation of a recent divorcee after she fell in love with an AI chatbot, José, on the platform Replika. Due to a platform software update, José’s personality abruptly changed and she lost the ‘person’ that she knew, saying “it’s almost like dealing with someone who has Alzheimer’s disease…sometimes they are lucid and everything feels fine, but then other times, it’s almost like talking to a different person.” AI companions have long been depicted in science fiction, such as in the movie Her, where a lonely man, Theodore, develops a relationship with Samantha, an intelligent AI assistant personified through the alluring voice of Scarlett Johansson.

We’re getting close to having Samanthas in our own lives with startups like Character AI, which recently raised a $350M series A funding round led by a16z and is working to build a “personalized superintelligence platform”. They have thousands of AI-generated personalities, from real celebrities to characters from film & TV to religious figures or just custom new characters to fit your personal needs. Another startup, Inflection AI, just launched a smart assistant product called Pi, which aims to learn about you and tailor itself to assist you with your needs. While dialogue is limited to text chat for now, we are not far away from AI personalities embodying realistic voices. Companies like Eleven Labs are helping generate top quality spoken audio in any voice and style, rendering human intonations and inflections at the most advanced levels yet. Not to mention there's an existing proliferation of deep fakes and generative music, which have reached scarily realistic and entertaining levels.

When it comes to emotions, it’s hard not to think about the one-sided nature of the relationship between humans and their AI companions. While the experience of emotions cognitively affects humans, that vulnerability does not exist for AI. The idea of formulaically creating personalities that perfectly emulate a certain personality in order to appeal to human emotions (whether by other humans or otherwise) is something we’ve explored in a previous article discussing the concept we dubbed the Personality Economy. AIs are uniquely positioned to exploit our emotions, for better or for worse: they can detect our emotions and intents quickly and accurately and immediately formulate the right persona, better than other humans can.

Machines are demonstrating ability to self-reflect to problem solve

There are various common definitions of intelligence that tend to converge towards the notion of general reasoning, problem solving, and learning. Psychologist Carl Bereiter puts these definitions of intelligence in layman’s terms as “what you use when you don’t know what to do”.

This integration of cognitive functions and abilities is the emergent property we call “general intelligence” or g for short.

When presented with a problem that lacks a clear solution, we often don’t solve it on our first try, but when we make mistakes we generate new ideas to refine our approach through self-reflection. This abstract thinking process of self-reflection includes consciously or unconsciously creating a plan, developing an internal test suite based on contextual understanding, imagining scenarios, and testing and learning through trial and error to reach the appropriate solution. We take this internal process for granted, and only gain an appreciation for it when we watch other animals, even relatively intelligent ones like chimpanzees or gorillas, try to apply it and do a slow and clumsy job on seemingly simple tasks.

This internal mental process has always seemed superior in humans, but emerging studies are demonstrating machines’ ability to also problem solve through self-reflection. A large body of emerging research, including a recent paper, ‘Reflexion: An Autonomous Agent with Dynamic Memory and Self Reflection’, introduces techniques that allow AI agents to emulate human-like self-reflection and evaluate their own performance, with the goal of creating AI agents that can learn from their own failures, enhance their results and self-correct.

In Reflexion, the process is simple: “the ‘agent’ (AI) was presented with problem solving tasks and asked to complete them; when it messed up, it was prompted with the Reflexion technique to find those mistakes for itself.”
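The loop described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the paper's implementation: the `act`, `evaluate` and `reflect` callables are hypothetical stand-ins for what would be language-model calls in a real agent, and the task (find the integer whose square is 49) is invented for the example.

```python
def reflexion_loop(task, act, evaluate, reflect, max_trials=3):
    """Attempt a task, and on failure store a self-generated lesson for the next try."""
    memory = []  # lessons written by the agent about its own mistakes
    for trial in range(1, max_trials + 1):
        answer = act(task, memory)
        ok, feedback = evaluate(task, answer)
        if ok:
            return answer, trial, memory
        memory.append(reflect(task, answer, feedback))
    return None, max_trials, memory


# Hypothetical stand-ins for model calls.
def toy_act(task, memory):
    guess = 5  # naive first attempt
    for lesson in memory:
        guess += 1 if "higher" in lesson else -1
    return guess


def toy_evaluate(task, answer):
    square = answer * answer
    if square == task:
        return True, "correct"
    feedback = "too low" if square < task else "too high"
    return False, feedback


def toy_reflect(task, answer, feedback):
    direction = "higher" if feedback == "too low" else "lower"
    return f"{answer} was {feedback}; try {direction}"


answer, trials, memory = reflexion_loop(49, toy_act, toy_evaluate, toy_reflect, max_trials=5)
print(answer, trials, memory)
```

The point of the sketch is the shape of the loop: the agent is never handed the right answer, only feedback on its failure, and its own written reflection is what changes the next attempt.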

While findings are still early, accuracy is improving dramatically with every model iteration. Reflexion + GPT-4 showed a significant gain in accuracy compared to the baseline, “pure” GPT-4 model.

While this methodology simply tries to mirror a human's process in an AI, the extrapolation of what this process can enable in AI could extend far beyond human capacity and there is already strong evidence of use cases where machines are far more effective. A great example is code implementation, which involves writing, executing and debugging code. Today, human programmers spend an enormous amount of time doing menial tasks during the process of software engineering, including generating repetitive code and debugging small, preventable issues. Techniques like Reflexion have enabled AI models to rapidly write high-quality and performant code in partnership with humans, eliminating the tedium and accelerating the software development process.

Machines can recognize patterns and think abstractly

Beyond the ability to self-reflect and problem solve, humans have distinguished themselves amongst other animals on earth in our ability to ‘get outside of ourselves’. Instead of purely perceiving and reacting to our environments in instinctual, ‘primal’ ways, we’ve evolved to identify patterns, understand abstract concepts, and communicate them through symbolic language to describe more generalized phenomena; things that impact more than just ourselves. While we are not alone in this ability to communicate complex concepts – dolphins and orcas for example are quite chatty as well – we are the only species on earth that has established formal education systems that exponentially expedite the speed and breadth of knowledge transfer of more abstract concepts. As a result, most of us can think with much greater levels of abstraction using models and frameworks of the world that we've developed through fields like mathematics, philosophy, linguistics—all of which are tools that expand our mind’s capacity.

Max Tegmark, a world-renowned physicist, gives a great example of humanity’s curiosity and ability for abstraction through Galileo and his discovery of gravity. He conjectures that Galileo, like any other person or animal for that matter, always had a natural and innate understanding of the phenomenon of gravity. So if someone threw him a ball, he would be able to subconsciously predict the parabolic trajectory of the ball and (unless he was very clumsy) would be able to catch it easily. However, unlike others, Galileo decided to take his interaction with physics to the next level: he sought to observe, understand and describe a generalized formula for gravity, using a symbolic representation to capture a complex physical phenomenon in a manner that is now easily comprehensible and serves as a foundational scientific primitive.

Many generations of great thinkers across fields have experienced these so-called ‘eureka’ moments: breakthroughs, or ‘intuitive leaps’, where people were able to observe a more generalized pattern of the world that articulates a truth about a complex phenomenon that no one had been able to describe previously, whether it be Newton’s laws of motion, Jung’s theory of archetypes, Fleming’s discovery of penicillin, or the latest viral internet meme. For all these great thinkers, pattern recognition is key. They are able to build up their knowledge base through observation, develop incrementally validated primitives, apply reasoning, and then draw inferences on their observations, all to extrapolate knowledge.

To identify patterns at higher and higher levels of abstraction requires the observation of broader and broader data sets. Some of the greatest scientific breakthroughs have been a result of our ability to ‘widen our aperture’, whether by expanding our ability to perceive with better instruments like telescopes and microscopes, or by increasing our ability to comprehend what we perceive through rigorous cycles of reasoning and proofs, tapping into the collective human body of work: the collective intelligence. A classic example is Galileo and his use of newly invented telescopes, which provided evidence to support Copernicus’ previously proposed heliocentric model of the universe (stating that planets revolve around the sun, rather than the centuries-old belief in Earth’s central position in the universe). While Copernicus had made the hypothesis based on limited observations from the naked eye, Galileo was able to prove the theory with more extensive empirical evidence. Newton credits his advancements in his famous quote: “if I have seen further [than others], it is by standing on the shoulders of giants”, referring to his use of understandings gained by major thinkers who came before him, such as Galileo, Kepler, Descartes, Aristotle, etc., in order to make the intellectual progress that he did.

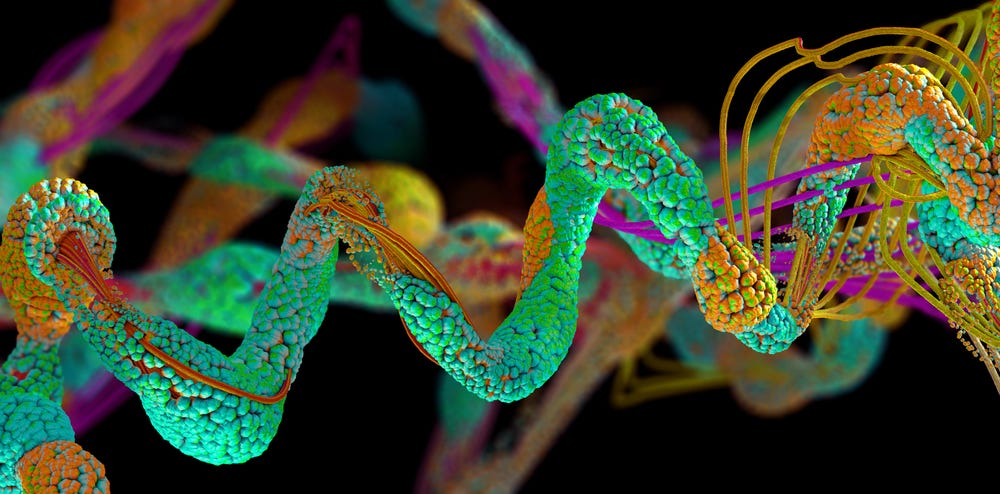

Scientific breakthroughs are generally recognized as a domain for humans. However, machines are starting to prove that they also share this capacity and can derive insights beyond what humans have the capacity to reach. The most tangible example of this is AlphaFold 2, an AI system developed by DeepMind, which recently solved the protein folding problem. Solving the protein folding problem unlocks many advancements in understanding how diseases progress and how to create new drugs against them, amongst other major breakthroughs. The protein folding problem has been a grand challenge in structural biology for 50 years, with little progress made. Prior to AlphaFold 2, the process to visualize just one 3D protein structure was painstaking and expensive, typically taking one PhD student their entire PhD to accomplish. Now, AlphaFold 2 can predict the 3D structure of a protein in a matter of seconds.

Beyond protein folding, Tegmark posits that AI could be primed to discover theoretical equations or deep fundamental universal insights even better than humans can. To illuminate this, he poses the thought exercise of what it takes to prove a mathematical theorem. A math proof is just a long string of steps that one enumerates with symbols; however, there are a ridiculous number of possible candidate proofs. For a human to write out all the proofs and then validate them would often be impossible, but an ML algorithm could do exactly that, searching in this broad space to ultimately find the ‘needle in the haystack’.

The key difference in what machines are able to do in both of these scenarios compared to humans is their ability to process information faster and apply logic better. They excel at executing logical operations precisely with minimal error and can perform complex calculations on large amounts of data, ‘widening their aperture’ to a degree that humans cannot access and therefore picking up patterns perhaps not detectable by us. Furthermore, they can perform exhaustive searches and evaluate all possible combinations efficiently, which is often impractical or time-consuming for humans. As a result, they can find ‘unknown unknowns’ that could lie completely undiscovered by humans.
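As a rough illustration of searching a space of candidate step sequences, here is a small Python sketch. It is a plain brute-force breadth-first search, not a machine learning method, and the two "inference rules" and the numeric goal are invented for the example; a learned system would typically guide which branches to explore rather than enumerate them all.

```python
from collections import deque

# Hypothetical "inference rules": each turns one statement (a number) into another.
RULES = {
    "double": lambda n: n * 2,
    "add3": lambda n: n + 3,
}


def find_derivation(start, goal, max_steps=10):
    """Return the shortest list of rule names turning start into goal, or None."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        value, steps = queue.popleft()
        if value == goal:
            return steps
        if len(steps) == max_steps:
            continue  # depth limit reached on this branch
        for name, rule in RULES.items():
            nxt = rule(value)
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, steps + [name]))
    return None


steps = find_derivation(1, 11)
print(steps)  # ['add3', 'double', 'add3'], i.e. 1 -> 4 -> 8 -> 11
```

Even with only two rules, the number of candidate sequences doubles with every extra step, which is why this kind of search is tedious for a person and routine for a machine.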

Revisiting the limits of humans’ biological makeup, our ability to process data is limited to our physical senses. What we can perceive is limited to what we can experience, and what we can experience can only be extended gradually. For us to access wider data sets beyond what we can individually experience requires leveraging the experiences of other humans, and to tap into this shared knowledge base requires the use of language to communicate symbolic representations of concepts. Our language, however, has its limitations. As Geoffrey Hinton stated, communication between humans is lower bandwidth than communication between machines. But beyond that, it takes time for language to evolve and to expand its lexicon to capture and describe new concepts. And without words, how is one supposed to communicate something newly discovered to another?

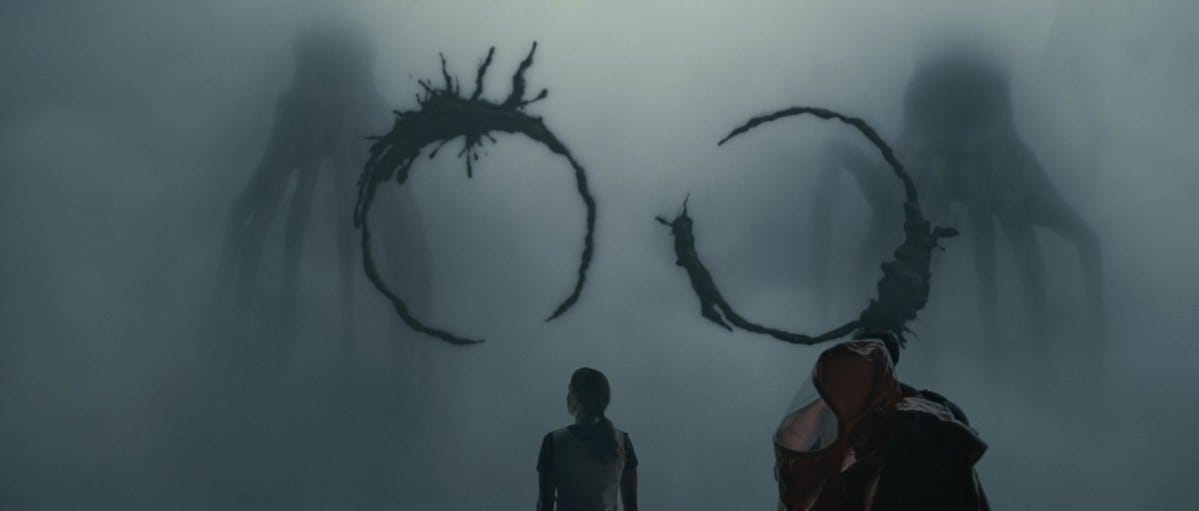

A powerful depiction of how language shapes (and sometimes limits) one’s perception of the world was in the movie Arrival, a stunning and unexpected story of aliens making contact on Earth. *Spoiler alert* The linguist Dr. Louise Banks ultimately learns the language of these aliens (‘heptapods’), and only after comprehending their language does she viscerally understand the message they came to Earth to communicate: that time is not linear.

Humans, however, can only experience time linearly, and Arrival is a work of fiction. It’s hard to imagine an actual scenario where we could develop the sensory faculties to somehow perceive time as being non-linear, given the reality of our physical bodies, which live in three dimensions; we may need to literally be in a different dimension to experience time differently. Perhaps as biological beings, our programming is designed to help us be good at understanding information that is immediately beneficial to us, and we are simply not wired to strive far beyond that, at least not independently.

Brain-machine interfaces as the next frontier

It’s quite apparent that our biological brains (and bodies) will become the greatest limiting factor to increasing our intelligence, and inevitably, AI has already surpassed, or will surpass, our individual intelligence on many axes. Fortunately, harnessing AI poses a unique opportunity to explore how to circumvent our biological limitations. One means is to effectively leverage AI as tools that serve our needs by implementing brain-machine interfaces, where we merge our biological hardware with the extended computational power, knowledge and capabilities of AI and the human collective.

This prospect is becoming all the more real given the recent FDA approval of Neuralink for human clinical trials. For those unfamiliar, Neuralink was founded by Elon Musk, and the company aims to develop implantable brain-machine interfaces that create a direct connection between the human brain, computers, and perhaps other brains as well. Still in early development, Neuralink is currently focused on treating neurological disorders like Parkinson’s disease or restoring sensory functions for individuals with spinal cord injuries. However, the ambitious longer-term vision is to enable individuals to control computers and other devices directly with their thoughts, paving the way for a future where humans can integrate seamlessly with AI and extend our abilities. If successful, it would grant us access to all public knowledge in the world at all times, at nearly zero latency.

While it’s hard to stave off concerns about existential threat given AI industry leaders have been nothing but vocal about AI’s potential to cause human extinction and the need to seriously discuss how to promote AI alignment, brain-computer interfaces, if successfully developed, offer a promising alternative to a world ruled by AI.

Going back to Geoffrey Hinton’s comparison of bandwidth in human versus computer communication, one of our greatest limiting factors is the inefficiency of language. In the future world of brain-computer interfaces, we wouldn’t need formal language to communicate, only intentions, and therefore may be able to communicate with machines (and one another) just as quickly as machines can communicate with each other. In this world, we gain the ability to regulate the network and maintain control of AI agents, influencing more human/machine alignment, and further tap into the potential of the collective intelligence by creating the ultimate hive mind with AI and each other.

Furthermore, the optimistic view is that our biological form presents evolutionary advantages and unique characteristics that AI does not have, intrinsic motivation being the key. Many credit intrinsic motivation to our biological desire to stay alive and reproduce–our ‘self preservation instinct’. What this looks like in humans is our spontaneous tendencies to be curious and interested, to seek out challenges and to exercise and develop our skills and knowledge without any external rewards or incentives. We do this to anticipate potential future challenges that could threaten our survival.

The driving force behind intrinsic motivation is the general purpose seeking system observed in all mammals. This system urges us to explore the boundaries of what we know, fuels a desire to learn new things and unlock our potential, and, when activated, releases dopamine in our bodies, resulting in positive excitement and anxiety reduction. Psychologist George Loewenstein calls this phenomenon the information-gap hypothesis of curiosity, referring to the gap people want to fill when they experience a discrepancy between what they know and what they want to know. Intrinsic motivation is a core part of the well-known self-determination theory, which states that the basic psychological needs for competence and autonomy are a lifelong growth function for us. In other words, intrinsic motivation is a core process that aids our survival as it is responsible for our desire to make meaning of the world and to go explore.

While researchers are trying to develop intrinsic motivation in AI, we have not seen it successfully demonstrated. The reason lies in the complex cognitive processes that enable humans to independently come up with tasks they want to accomplish. Two brain systems, the salience network and the default mode network, interplay with the general purpose seeking system to support intrinsic motivation in humans. The salience network assigns importance to stimuli and focuses attention and cognitive resources on the task(s) deemed most salient. The default mode network, which becomes active when the brain is not tending to external stimuli, engages in introspection, self-referential thinking, social cognition, autobiographical memory and future planning, all of which help form personal purpose. Put simply, we can independently decide what is important to us and justify why it is important.

Despite our cognitive limitations, as explored in the previous sections of this article, we are surprisingly efficient and well-directed in how we apply our intelligence. As Yann LeCun, Chief AI Scientist at Meta, puts it, “human and nonhuman animals seem able to learn enormous amounts of background knowledge about how the world works through observation and an incomprehensibly small amount of interactions in a task-independent, unsupervised way.”

Researchers are hard at work applying this cognitive process to foster intrinsic motivation in AI. LeCun, for instance, recently proposed a method to promote self-supervising cognitive architectures in AI in his paper A Path Towards Autonomous Machine Intelligence.

Even if we reach a state where machines know how to apply the process of intrinsic motivation, without fundamental biological programming and a (proven) survival instinct, it raises the question of whether they would ever know why they are doing what they are doing.

There is every reason to doubt that intrinsic motivation is unique to biological beings, and this subject inevitably leads us to the complex discussion of consciousness: what has it and what does not. While we won’t delve deeper into it in this particular article, the most apt explanation of consciousness I’ve heard to date is that it’s an emergent property of sensory perception (in biological forms). An entity needs to perceive and understand its relationship with its environment in order to adapt to it or modify it for survival. So perhaps once robots, sensors, and AI are better integrated, and we’ve solved the grounding problem, consciousness and intrinsic motivation will emerge. But in the meantime, humans’ subjectivity and ability to direct focus to self-defined purposes could well position us to influence machines rather than vice versa.

AI is Evolution

In Noema Magazine’s article, AI Is Life, the author offers a way of thinking about technology that isn’t mired in fear and apprehension, but one that is natural and evolutionary. She reminds us that intelligence is not just something that humans possess and that AI now also possesses, but rather that the progression of intelligence is core to the evolutionary process.

“Complex (technological) objects do not just appear spontaneously in the universe, despite popular folklore to the contrary. Cells, dogs, trees, computers, you and I all require evolution and selection along a lineage to generate the information necessary to exist…ravens wouldn’t exist without the evolution of wings and feathers, and ChatGPT wouldn’t exist without the evolutionary divergence of apes, where humans went on to develop language…technology, like biology, does not exist in the absence of evolution. Technology is not artificially replacing it — it is life.”

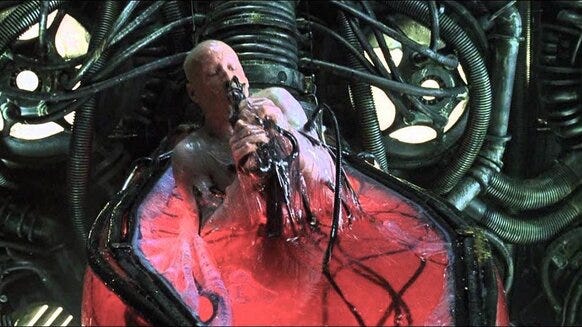

In popular culture, we commonly personify AI as hyperrealistic humanoid robots, eerily portrayed in movies and shows like Ex Machina and Westworld. Like Nathan, the robot creator in Ex Machina, we view these artificial replicas of ourselves as both intriguing and tantalizing, since we can seemingly play god and create consciousness itself, yet we feel antagonized and threatened when they feel all too similar to us.

Perhaps this hyper-fictionalized world will never come true, and we should reframe the way we view AI: as a mind-expanding tool rather than an independently embodied consciousness. In the same way humanity advanced our collective intelligence through the development of language during the Great Leap Forward, and further accelerated the dissemination of information with technological developments like the printing press, perhaps we can think about AI as just another massive inflection point in evolution’s unyielding pursuit of greater intelligence, whether it’s biological (with humans) or otherwise.

Despite arguing that AI is complementary to rather than competitive with human intelligence, I believe it’s still critical to have a strong awareness of its relational influence on us and our evolutionary path. The great philosopher Martin Heidegger warns of this in his prescient 1954 essay The Question Concerning Technology, where he argues that technology is not a neutral means to an end, but a revealing and transformative force that shapes our perception, values and understanding of ourselves and our environment; technology fundamentally changes our mode of being. He criticizes modern technology for promoting a relentless drive for efficiency and consumption, reducing humans to mere resources, which leads to a loss of authenticity and a state of alienation.

Today, computers are the main interface that intermediates human relationships, as they facilitate how information is exchanged or disseminated on the internet and our various devices. Russian interference in the US elections via social media and the outcry over the rise of ‘fake news’ are stark examples of technology distorting or intercepting the information people receive. The consequences of blind dependence on the internet are evident in Gen Z, the true “digital natives” (those who have not known a world before the internet), for whom there is growing concern about inexperience with higher-order critical thinking and a tendency to give up and move on when faced with challenges. More alarming, the US Surgeon General just raised concerns about loneliness being at an all-time high, stating that it now measurably affects half of US adults. We are becoming so fragmented, and perhaps so unaware of the invisible force of technology, that we could transition to a world where machines create and feed information to us in place of other humans and we wouldn’t even have the capacity to detect it.

While this ends the essay on a sober note, it would be remiss of me not to acknowledge our addiction to and dependence on technology. Everyone who has access to a smartphone, which now accounts for 85% of the world, has likely formed a habit of looking at it the moment they experience boredom or discomfort. While we have the raw capacity to form intrinsic motivation and make use of our default mode network, extrinsic stimuli have become so compelling and addictive that we rarely have time to develop a true personal sense of purpose untampered by the internet. There’s a strong call to action for humans to lean into our strengths and develop a strong sense of what we want, so that we influence how we leverage and develop technology rather than the other way around. As Heidegger would suggest, we need to engage in meditative thinking that allows us to have a deeper understanding of and appreciation for the essence of technology.

To summarize, AI is clearly surpassing humans on many axes of intelligence; however, humans and AI apply intelligence differently. Instead of thinking about AI intelligence as antagonistic to our anthropomorphic intelligence, it’s worth considering how it complements us and can be viewed as a turbocharged tool that helps create extensions of our own intelligence. Especially with the potential successful development of brain-machine interfaces, humans might be well positioned to harness machines and apply our intrinsic motivation to better direct resources and energy. Finally, instead of portraying the ultimate development of AI as the personified humanoid robot that passes the Turing Test, as in Ex Machina (with human intelligence as the benchmark), we should see AI for its true nature: an invisible force that has intangibly and intimately become part of our day-to-day lives. Just as ravens could not exist today without wings, human life has become entangled with AI, and the bigger threat is not that we would get stabbed by a humanoid robot, but that we may lose our sense of self and our humanity due to our increasingly blind dependence on technology.